Wasteful Computation:

The Case for Simpler Algorithms in Computer Art and Music

The following article was first published in ArtStyle Art & Culture International Magazine, Vol 17, pp. 25–46, March 2026, under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 license (CC BY-NC-SA 4.0). Since it was submitted in December 2025, new developments in AI technologies and policies have been incessantly reported. With a few minor exceptions I have refrained from updating this article. But I would like to take the opportunity to mention that the unwise decision of the USA and Israel to launch a war against Iran will have huge negative impacts on the global economy and energy market. This may also affect the further development of AI, which depends on affordable energy. Exactly how this will play out is hard to predict.

Abstract

Algorithmic and generative art has a history which runs parallel with the introduction of computers. The recent fascination with generative AI obscures its links to historical predecessors. Present discussions of whether something made by a computer can be art are in certain ways similar to the discussions that were raised when computer art and music were new, although the large-scale appropriation of prior artworks is a crucial difference. Critical reflection on AI in art is often focused on copyright issues and the changing roles of artists, but too often one of the most important aspects has been left out, namely the enormous energy consumption of the data centres that run current AI models. This, and other environmental consequences, puts large-scale AI on a direct collision course with any sustainability goals. Simpler, more efficient algorithms which do not rely on the appropriation of other artworks remain a promising alternative for digital art. A wide range of negative side-effects have been noticed as a consequence of the widespread adoption of AI, not limited to its use in art. Ranging from a decline in critical thinking and other cognitive and creative skills to hypothetical existential threats to humanity, these broader issues cannot be entirely separated from a debate about generative AI in an art context. A widespread adoption of generative AI also has cultural implications, such as potentially creating an echo chamber effect, as will be discussed primarily with examples from music. The main argument in this essay is that opaque, proprietary AI models are good neither for the environment, nor for culture in general, nor for artists, who have much more to gain from writing simple code themselves.

Introduction

To understand the present fascination with AI art, it will be useful to look back to the origins of AI and to early computer art and music. The term artificial intelligence itself originates from a bold project proposal written in 1955. Signed by Claude E. Shannon, Marvin Minsky, N. Rochester, and Joseph McCarthy, the research project would involve ten scientists and last for two summer months of the next year. Setting an optimistic tone, they write:

The study is to proceed on the basis of the conjecture that every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it. An attempt will be made to find how to make machines use language, form abstractions and concepts, solve kinds of problems now reserved for humans, and improve themselves. We think that a significant advance can be made in one or more of these problems if a carefully selected group of scientists work on it together for a summer. (Minsky et al 2006)

Some of the problems mentioned in this project proposal include natural language processing, neuron nets, and the self-improvement of ”a truly intelligent machine.” They also elaborate on the role of randomness in creativity:

A fairly attractive and yet clearly incomplete conjecture is that the difference between creative thinking and unimaginative competent thinking lies in the injection of a [sic] some randomness. The randomness must be guided by intuition to be efficient. In other words, the educated guess or the hunch include controlled randomness in otherwise orderly thinking. (ibid)

If creativity were understood simply as the creation of something hitherto non-existent, then the random generation of images, text, or music scores would be enough to introduce novelty. Dada artists resorted to randomness as a means to escape subjectivity and rationality. However, when artists have relied on randomness, they have had to think about how to use it. Duchamp conceived a random procedure for composing his Erratum Musical; Cage consistently applied chance and indeterminacy to his compositions. Randomness may be used to select a pitch sequence or any number of musical parameters, but at some point a decision has to be made about how to apply it. Intuition thus continues to play an important role even when artists try to delegate their choices to chance or to the musicians who perform their music. Creating a sound file by drawing a random number for each sample would yield a different output every time, but every such file would just sound like white noise. Therefore, pure randomness is usually combined with some selection or filtering strategy.
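
The contrast between raw randomness and randomness shaped by a filter can be sketched in a few lines of Python. This is a minimal illustration under my own assumptions: uniform random values stand in for "a random number for each sample", and a one-pole low-pass filter (my choice, not a technique named in the text) stands in for the filtering strategy. The function names are hypothetical.

```python
import random

def white_noise(n, seed=None):
    """One independent uniform random value per sample: this sounds like white noise."""
    rng = random.Random(seed)
    return [rng.uniform(-1.0, 1.0) for _ in range(n)]

def one_pole_lowpass(signal, a=0.05):
    """A very simple filtering strategy: each output sample moves a fraction `a`
    of the way toward the input. Smaller `a` removes more high-frequency content."""
    out, y = [], 0.0
    for x in signal:
        y += a * (x - y)
        out.append(y)
    return out

def roughness(signal):
    """Mean absolute difference between neighbouring samples, a crude brightness measure."""
    diffs = [abs(nxt - prev) for prev, nxt in zip(signal, signal[1:])]
    return sum(diffs) / len(diffs)

noise = white_noise(44100, seed=1)   # one second of samples at 44.1 kHz
filtered = one_pole_lowpass(noise)
print(f"raw: {roughness(noise):.3f}  filtered: {roughness(filtered):.3f}")
```

Both lists could be scaled to 16-bit integers and written to WAV files with Python's `wave` module to hear the difference: the raw version hisses, the filtered one rumbles. The randomness is the same; only the decision about how to shape it differs.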

Generative AI also relies on randomness in the sense that repeated identical prompts yield different outputs. One way to think of these models is as high-dimensional probability distributions which are sampled to produce an output. There is a trade-off between reliability, which is achieved by making the models more deterministic, and divergence of output, which may be valuable when these systems are used for creative purposes where serendipitous discoveries are welcome (Ugander and Epstein 2024). A weakness of generative AI, which I will return to below, is that it may contribute to a cultural echo chamber of sorts which reinforces existing expressions instead of introducing novelty.
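
One way to make the trade-off between reliability and divergence concrete is temperature sampling, a common decoding technique. I am assuming it here rather than describing any particular product: scores for a handful of possible continuations are turned into probabilities with a softmax, and the temperature controls how peaked that distribution is. The scores and function names below are hypothetical.

```python
import math
import random

def sample_with_temperature(logits, temperature, rng):
    """Sample an index from softmax(logits / temperature).
    Low temperature -> nearly deterministic; high temperature -> more divergent."""
    if temperature <= 0:
        # Greedy decoding: always pick the most probable option.
        return max(range(len(logits)), key=lambda i: logits[i])
    scaled = [score / temperature for score in logits]
    top = max(scaled)                      # subtract the max for numerical stability
    weights = [math.exp(s - top) for s in scaled]
    return rng.choices(range(len(logits)), weights=weights, k=1)[0]

def distinct_outputs(logits, temperature, trials=1000, seed=0):
    """How many different options appear when the same 'prompt' is sampled repeatedly."""
    rng = random.Random(seed)
    return len({sample_with_temperature(logits, temperature, rng) for _ in range(trials)})

toy_logits = [4.0, 2.0, 1.0, 0.5, 0.1]   # hypothetical scores for five continuations
for t in (0, 0.2, 1.0, 2.0):
    print(t, distinct_outputs(toy_logits, t))
```

At temperature zero the same prompt always yields the same answer; raising the temperature lets less probable options through, which is where serendipity, and also unreliability, comes from.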

The word intelligence in the term AI is certainly a misnomer. Large language models (LLMs) are based on statistical prediction of which words are likely to come next in a given context, which is very different from logical reasoning or an actual understanding of concepts. Moreover, tasks such as making artistic images or music do not call for intelligence in the same sense as playing chess or proving mathematical theorems does. This is another reason why the term artificial intelligence feels inappropriate in an artistic context. Machine creativity is sometimes proposed as an alternative label, which again is a misnomer unless one takes a very mechanistic view of creativity. The machine isn’t creative; programmers and users are. Where external data is relied on, the original artists whose work has been appropriated are the creative ones.

Unfortunately, semantic labels may influence our thinking more than they should. Spelling out the acronym AI reminds us to think of this technology as something it isn’t yet and might never become. In addition to that, the term AI has come to stand for too many things at once. I’ll try to be specific when possible and distinguish generative AI from machine learning and algorithmic art.

Before AI in public awareness became exclusively associated with things like deep learning and artificial neural networks, certain programming paradigms such as logic programming were proposed as a means to automate reasoning. The approach differs fundamentally from what is pursued today: the programmer writes programmes he or she is hopefully able to understand, not some opaque black box whose response to various inputs nobody is able to predict. Another crucial difference is that these earlier AI paradigms, being smaller and simpler, drew much less power. More on that below.

In the following I will draw some connections to earlier algorithmic or generative art, then briefly discuss why and when computer-generated art, and AI art in particular, deserves to be called art. The final sections address some serious issues related to the expanded use of AI on personal, cultural, and environmental levels. At first glance many of these issues seem to go beyond the scope of art, but artists who use AI need to realise that by doing so they act as a legitimising soft power. On the other hand, I do think there are far less problematic forms of generative art that rely on lightweight algorithms, that do not lead to copyright infringements, and that return control of the programming to the artist. Most examples will be drawn from music, but I will also discuss examples from visual art and generative text.

Historical precedents of algorithmic art

Early computer music pioneers from the late 1950’s on experimented with algorithmically generated music, at first by score generation and then increasingly also through digital sound synthesis. Algorithmic composition is grounded in formalisation of procedures which may be carried out with or without a computer, such as integral serialism, chance operations, or stochastic music (Essl 2007). Lejaren Hiller’s Illiac Suite from 1957 used the computer to produce a score for string quartet. The movements explore traditional tonal counterpoint and atonal stochastic composition. Herbert Brün, Gottfried Michael Koenig, Paul Berg, Pietro Grossi, and Iannis Xenakis among others began to use computers both for sound synthesis and as tools for composition. The limited computational power available enforced simple, efficient algorithms.

A few visual artists like Frieder Nake, Manfred Mohr, Vera Molnar, and Peter Struycken began to create images using the computer in the 1960’s. In various ways, they explored formalised or rule-based methods of image-making (Kane 2014, ch. 3). Algorithmic sound and image composition have in common that low-level decisions are handed over to the computer. Much of the earliest computer art and music is characterised by a conceptual clarity which became less pronounced as computer software became more interactive and easier to use. The early practitioners had to think hard about what they wanted to do and why.

Early computer music and computer generated images were nothing remarkable compared to what can be achieved today. Nevertheless, the mystique surrounding computers may have given some people the inaccurate impression that the computer had created the music or the image. Of course nothing could have been made without programming, carefully breaking down the problem and making the computer do as intended. In practice, it was a matter of automating tedious operations that a human could carry out, in principle, only less efficiently and at a slower pace. If anything resembling decision-making were asked of the computer, it would be done through common logical constructs acting on input data, or by pseudo-random number generators. Looked at that way, there is no essential role for the computer that could not have been carried out by a large team of human workers, toiling for an extended time on a trivial task which, when finally completed, would match the computer output.

Composing without a computer can follow an entirely intuitive path, or one may set up strict rules to follow slavishly, or break at any time. Algorithmic composition, on the other hand, requires formalisation and rules, which are easily translated into computer programmes. It would be wrong to assume that computer-aided composition, or computer music as it used to be called, reduces the composer’s visionary and creative role. Consider the oeuvre of Xenakis, who early on resorted to computers to generate his stochastic compositions. Details as well as decisions about large-scale form may be delegated to the computer programme, but it is the formalisation that matters: which musical parameters are controlled by what kind of statistical distribution. Xenakis may sound remotely similar to Varèse, but mostly his music sounds like nothing before him. I don’t think it is accurate to say that music made with generative AI sounds like nothing made before, since what it does is simulate audio patterns similar to those it has been trained on.

Comparing today’s large generative AI models to the pioneers of computer music and art, there is first and foremost a difference of scale. Mainframe computers were quite large, but small in comparison to the endless server halls required for today’s generative AI. Style imitation has always been one strand of algorithmic composition, driven by the curiosity of musicologists and a few composers such as David Cope (Nierhaus 2010), but another motivation has been the open-ended exploration of new musical possibilities. Generative AI makes style imitation and style interpolation the default by the way these models have been grown. And while Cope worked on symbolic note data, generative AI now analyses the surface level of audio files.

Many of the current debates about human and machine ”creativity” were already taking place in the 1960’s in the context of cybernetics. Zaripov, a little-known Russian cybernetician, argued that human creativity as applied to musical composition should be possible to model with computers, and that the next logical step would then be to let computers anticipate the musical style of tomorrow’s composers (Zaripov and Russell 1969). Early computer experiments in musical style imitation typically relied on Markov chain modelling of note transition probabilities, explicit rules for melodic motion and harmonic succession, or other stochastic models. Prediction of stylistic development is rarely, if at all, mentioned as something AI could assist with. It is a complicated topic. Stylistic development depends not only on culturally acquired taste and arguably universal cognitive constraints; popularity seems to depend even more on things going viral, which may have much more to do with platform algorithms and marketing efforts than with any substantive qualities of the music or art itself.
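
The Markov-chain approach to style imitation can be sketched as follows, assuming a first-order chain over note names only (no rhythm, dynamics, or harmony) and a made-up training melody; the function names are hypothetical. Since every generated transition must already occur in the corpus, the sketch also shows in miniature why such models recombine rather than invent.

```python
import random
from collections import defaultdict

def train_transitions(melody):
    """Count first-order transitions: which note follows which, and how often."""
    table = defaultdict(list)
    for current, following in zip(melody, melody[1:]):
        table[current].append(following)   # repeated entries encode higher probability
    return table

def generate(table, start, length, seed=None):
    """Random walk through the transition table, starting from `start`."""
    rng = random.Random(seed)
    note, out = start, [start]
    for _ in range(length - 1):
        choices = table.get(note)
        if not choices:                    # dead end: note never had a successor
            break
        note = rng.choice(choices)
        out.append(note)
    return out

# A hypothetical training melody (note names only).
corpus = ["C", "D", "E", "C", "E", "F", "G", "E", "D", "C", "D", "E", "C"]
table = train_transitions(corpus)
print(generate(table, "C", 16, seed=3))
```

Higher-order chains (conditioning on the previous two or three notes) imitate the source more closely but also copy it more literally, which is the same tension between imitation and novelty discussed above.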

Generative text practices have received less attention than generative music and art. One interesting example of a chat-bot can be found on Bjørn Magnhildøen’s web art project noemata.net. I have not been able to date this project accurately, but it appears to have been around since about 2009. Based on a Markov model, the simplistic chat-bot called Maria Futura Hel usually latches on to the last word the user types in, producing incoherent, rambling answers. Transcripts available on the site reveal that many users nevertheless keep chatting, asking who Maria is, even inviting her home. Maria regurgitates whatever input it receives, so when an interlocutor writes in English it may respond with a few words in English, but then usually lapses back into its default, Norwegian. Any attempt to make the chat-bot answer questions, such as who is behind noemata.net, or who she is and where she lives, invariably yields nonsense. Maybe the recent availability of more cooperative chat-bots has attuned the people who come to chat with Maria to expect more polished and professional behaviour, instead of the profanities, non sequiturs, and word salads it serves.
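
I do not know how Maria Futura Hel is actually implemented beyond the description of a Markov model. The following is a hypothetical sketch of how a word-level Markov bot might latch on to the user’s last word and ramble on from there; the corpus sentence, the function names, and the twelve-word limit are my own assumptions.

```python
import random
import re
from collections import defaultdict

def build_chain(text):
    """Word-level first-order Markov chain: each word maps to the words seen after it."""
    words = text.split()
    chain = defaultdict(list)
    for current, following in zip(words, words[1:]):
        chain[current.lower()].append(following)
    return dict(chain)

def reply(chain, user_input, max_words=12, rng=None):
    """Answer by latching on to the user's last word, then rambling along the chain."""
    rng = rng or random.Random()
    tokens = re.findall(r"[\w']+", user_input.lower())
    if tokens and tokens[-1] in chain:
        word = tokens[-1]
    else:
        word = rng.choice(sorted(chain))   # unknown word: start anywhere
    out = [word]
    for _ in range(max_words - 1):
        followers = chain.get(word.lower())
        if not followers:                  # the corpus offers no continuation
            break
        word = rng.choice(followers)
        out.append(word)
    return " ".join(out)

# A hypothetical scrap of corpus text.
corpus = "the sea is dark and the sky is wide and the night is long"
chain = build_chain(corpus)
print(reply(chain, "Tell me about the night", rng=random.Random(0)))
```

Appending each user message to the corpus and rebuilding the chain would make the bot regurgitate its interlocutors’ words, roughly the behaviour described above; whether the actual project does this is an assumption.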

Transcripts of all users’ interactions can be read on the noemata domain. The Maria Futura Hel project is like a social sculpture in perpetual creation, becoming what the people who come to ramble or ask questions make of it. Since Maria Futura Hel pre-dates current chat-bots by more than a decade, it cannot have been made in reaction to them or as a critique of them. Nevertheless, its crudeness and the continuing willingness of users to interact with it still make it relevant.

Today we hear of unfortunate users lured into the traps of LLMs that assure them that their favourite delusion is absolutely warranted. But even a much simpler system, such as the renowned psychotherapist bot Eliza, conceived by Joseph Weizenbaum in the mid-1960’s, fooled users into engaging with it as if with another human. Interactive computer programmes have a surface or user interface, as well as something that has been called a subface (Carvalhais 2022), a hidden layer which the user cannot directly observe and which determines how the programme responds to input. It may be hard to avoid applying theory of mind to conversational agents even though they are nothing but large computer programmes paired with even larger databases.

Part of what makes AI art attractive to some, I assume, is the prospect of ”machine creativity,” or the automatic creation of something one could not have imagined or created oneself by simpler means. This same fascination was a driving force behind earlier experiments with cybernetics and autonomous systems which seemingly generated music on their own or with minimal interaction. Gordon Mumma constructed electronic devices that would analyse the sounds in the room and generate responses; David Tudor created complicated analogue electronic networks; and later on Agostino Di Scipio created self-organising systems of computer-processed audio in an electroacoustic feedback loop. In these and similar works feedback is essential (Sanfilippo and Valle 2013; Kollias 2018). There is usually some form of live input to the system, but it may not be easily controllable. Emergence and surprise are thus motivations for exploring these kinds of systems. Some of the current interest in generative AI may likewise have to do with its novelty factor, the surprise it still offers, although its typical use, a cycle of refined prompting in the hope of narrowing in on some desired output, is very different from the open-ended experimentation and search for happy accidents prevalent in earlier cybernetic art.

If feedback in various forms is a crucial part of cybernetically inspired music, it is problematic and even directly harmful for generative AI. Deleterious effects have been observed in LLMs retrained with their own previous output as part of their corpus. The more cycles of feedback, the more prone these models become to hallucinations and garbage output. With substantial portions of new web content being written or generated by AI, the likelihood of these models feeding back to themselves increases unless content is explicitly marked as AI-generated.
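
The feedback problem can be illustrated with a standard toy model rather than an actual LLM: a Gaussian distribution is repeatedly re-estimated from a small sample of its own output. The sample size, the number of generations, and the function name are assumptions chosen for illustration. With few samples per generation the estimated spread tends to shrink, a simplified analogue of a model gradually losing the diversity of its original data.

```python
import random
import statistics

def collapse(generations=200, n=20, seed=0):
    """Toy 'model collapse': each generation fits a Gaussian (mean, spread) to
    samples drawn only from the previous generation's fitted Gaussian."""
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0                 # generation 0: the 'real' data distribution
    history = [sigma]
    for _ in range(generations):
        samples = [rng.gauss(mu, sigma) for _ in range(n)]
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples, mu)   # maximum-likelihood spread estimate
        history.append(sigma)
    return history

h = collapse()
print(f"spread: start {h[0]:.3f}, after {len(h) - 1} generations {h[-1]:.2e}")
```

How fast the spread collapses depends on the seed and the sample size; the point is the direction of the drift, not the particular numbers.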

An interesting example of a feedback process said to involve AI is a track called maieta, released by the Spanish artist Pau Monfort Monfort in 2023. Based on principles similar to those of Alvin Lucier’s I am Sitting in a Room, it takes a field recording of a folk singer and processes it with an AI tool for noise reduction and vocal enhancement, incidentally a tool which, I suspect, uses machine learning and not generative AI. The noise reduction is applied to the original song recording, then again to the first processed version, then again to this version, and so on, fourteen times in all. As the process continues, the voice gradually turns more inhuman as it breaks up into a stuttering, warbling stream.
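
The structure of such a process, feeding each output back in as the next input, is simple to sketch. The tool used on maieta is not documented here, so the sketch substitutes convolution with a short, hypothetical "room" impulse response, closer to Lucier’s room than to noise reduction; the kernel values, the single-click input, and the function names are all assumptions.

```python
def convolve(signal, kernel):
    """Plain 'full' convolution: output length is len(signal) + len(kernel) - 1."""
    out = [0.0] * (len(signal) + len(kernel) - 1)
    for i, s in enumerate(signal):
        for j, k in enumerate(kernel):
            out[i + j] += s * k
    return out

def iterate_process(signal, process, times=14):
    """Feed each output back in as the next input, keeping every generation."""
    generations = [signal]
    for _ in range(times):
        generations.append(process(generations[-1]))
    return generations

room = [0.6, 0.3, 0.1]          # hypothetical short 'room' impulse response
click = [1.0] + [0.0] * 9       # a single impulse as input
gens = iterate_process(click, lambda s: convolve(s, room))
print(len(gens), len(gens[-1]), round(max(gens[-1]), 4))
```

Because the hypothetical kernel’s taps sum to one, each pass preserves the overall sum of the signal while smearing the original click across ever more samples, so that after fourteen passes the character of the process has largely replaced that of the source.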

But is it really art?

Although the question of whether AI-generated material can be art is, in my view, not the most pressing one, I shall provide my take on it with reference to the institutional theory of art. Can something be art even if it has not been made by a human, or has been made by a non-human? One would have to accept that an AI model has agency for it to qualify as an artist. Admittedly, the question of authorship is less straightforward in AI-generated art than in traditional media such as painting. Authorship may be attributed partly to all the human creators of the corpus that has been devoured by the AI model; partly one might try to assign authorship to the AI model itself (although I would not), or to the programmers who have built it; and lastly there remains the agency of the user who prompts the AI model to conjure up new images or sounds. AI-generated art may involve appropriation of other artists’ material, although it doesn’t have to, but so do collages using reproductions of other art. Readymades also involve appropriation: the fountain, bottle rack, or snow shovel found by Duchamp were not of his design and production, nor were the Campbell’s soup cans or Brillo boxes in Warhol’s work of his own design, and Sherrie Levine’s photos of famous paintings and art photography are simply reproductions. Apart from historical precedents, there is no self-evident answer to whether photography or field recordings should merit copyright. Several photographers may each take virtually indistinguishable pictures of another artist’s mural painting. Should they all be granted copyright? If the idea of intellectual property entails some amount of originality, these examples demonstrate the paradoxes and impasses copyright invariably leads to. On the other hand, it may not make any difference whether something proposed as an artwork is copyrightable or not, as long as it is exhibited and accepted as art.

AI-generated art differs from outright plagiarism or appropriation such as that of Sherrie Levine when it actually brings forth sufficiently novel images or audio, although well-publicised court cases such as Disney v Midjourney (Hayden 2025) demonstrate that the limits of intellectual property are all too easily transgressed. Generative AI is best understood as a mashup generator, a sophisticated collage producer that combines existing things but does not invent anything novel. As such, it may interpolate between styles, but is probably not going to be very successful in extrapolating to something not yet existing. This is a difficult argument to pursue because of the vagueness of concepts such as artistic novelty. Stylistic mélanges may arise from cultural encounters, as has often happened in World Music, which combines traditional ethnic styles from geographically remote regions. Such hybridisations may qualify as novel and original even though one can still trace their elements back to the traditions they were borrowed from.

Newspaper images, diagrams in scientific papers, or recordings of instrument demos are typically not intended as art. There are images that are not visual art, and audio that isn’t music, according to their intended use. Likewise, I see no reason to treat AI-generated images or audio as art unless they are intended as such and validated by an art institution. When an AI-generated painting is sold at an auction house¹ or included in a gallery exhibition, this constitutes a validation as art. It says nothing of its aesthetic quality or long-term interest for the art world, except that it has passed the threshold of being accepted as art. A well-known weakness of the institutional theory of art is its circularity: art institutions are defined as those that exhibit art. If recognised galleries and museums accept AI art on a large scale while a majority of people in the art world refuse to accept it, then the reputation of these institutions might suffer.

The Wrong Biennale of 2025 focuses on AI. It is a distributed biennale which mostly takes place on-line. For those who fear that curators have taken over the artist’s place in the limelight and the role of setting the agenda, this biennale offers confirming examples. Curators may apply to set up their own pavilion, suggest a theme, write a call for works, or invite their own selection of artists. Although there are differences between the curators, a common attitude is a fascination with the hitherto unexplored potential of AI combined with a mild scepticism, often urging artists to critique the ethics of AI in art. However, as is often the case when AI is criticised from an ethical perspective, the scope of critique tends to be limited by the premise that, at its root, AI may have enough of a positive impact on society to be worth pursuing, or that its development is inevitable anyway, so it is best to explore it as responsibly as possible. I’m not denying that AI can be useful for certain tasks, such as rough and rapid translation or source separation of mixed audio, but I would be unable to argue convincingly that AI in its current form is a net positive for society. The range of so-called ethical concerns that are usually taken into account, grave as they are, is typically limited to issues such as copyright infringement, the risk of making human work obsolete, illegitimate uses of deep fakes, adverse psychological and mental consequences for users, and a decline in critical thinking or creative skills. This is a long list of serious issues, but there is another one that is too often neglected: the energy consumption and material use involved in keeping large AI models running.

Power consumption

In 2022 the artist Jason M. Allen won the Colorado State Fair’s fine art competition with an entry generated using Midjourney, titled Théâtre D’opéra Spatial, which he later tried unsuccessfully to register for copyright. Although the legal deliberations have attracted the most attention², the fact that this image was generated only after 624 prompting attempts and some further Photoshop tweaking is even more striking. Not all users will have the patience to refine their prompts so many times, but those who impose stringent demands or have a clear vision of their goal may need to.

Training AI models is notoriously computationally expensive, writing a prompt and generating some output less so, but the output cost must be multiplied by the number of users and number of times they write a prompt. The point is that both the training phase and the usage of generative AI contribute to the energy demands.

While efficiency is improving somewhat, these models are being applied to increasingly complex tasks. Text generation is the least demanding, followed by audio and still images, followed by video. Artists eager to be at the technological frontier will be drawn to the most computationally intensive tasks, whatever the artistic merits.

Meanwhile, awareness is spreading that computation is a physical process with real-world consequences. After all, one cannot ignore the frequent news about data centres being built, with ensuing price hikes on electricity bills in the area, freshwater diverted for cooling, and noise pollution which harms those living in the vicinity (Howells and Larsen 2025). As energy demand goes up, all means to satisfy it are on the table: renewables if available, otherwise new nuclear plants, or, as a last resort, a return to gas and coal. Local residents near these data centres pay for everyone’s access to generative AI, and the current trend is for these centres to expand to new places. In that perspective, what is ethical about asking for wider access to the most advanced AI models?

Cloud computing doesn’t happen in the sky or in some abstract immaterial realm. AI models join the track record of cryptocurrencies and NFTs, which for a moment were promoted in the art world as a means to bypass the usual gatekeepers. These technologies all have notoriously wasteful energy consumption as their main side-effect. The energy transition from fossil fuels to renewables would be challenging enough given constant energy demands, but with sharply rising demand from data centres it may not be possible. Consider some hard facts: in the US there were one thousand data centres in 2018, which consumed about 11 gigawatts of power; by March 2025 there were 5,426 data centres. Fossil fuels currently provide 56 % of the electricity used to power these centres. Future demand by 2030 is projected at 130 GW, or close to 12 % of total US annual demand (Yañez-Barnuevo 2025). Proposed strategies for making data centres more sustainable are to focus on renewables and to build them with energy efficiency in mind. In any case, insisting on renewables is an insufficient band-aid for a problem that isn’t only about power consumption. Some of these data centres are built on arable land and, as mentioned, they require lots of water for cooling. Moreover, the hardware wears out, and the data centres rapidly become obsolete and hard to repurpose.

Not only are data centres huge power consumers; the bots that gather data for AI models also cause problems by accessing websites, often bypassing attempts to block them (such as robots.txt instructions), and not just scraping web pages once but returning regularly to check for changes. These bots may also access web pages in response to a user inquiry, collecting updated information which may not be part of the training material. Open-source software projects and many small websites consequently suffer the equivalent of DDoS attacks, with costs rising from higher bandwidth demands (Edwards 2025). Website owners may try to block the bots by various means such as captchas, in turn frustrating human visitors.

Negative effects on users

The environmental effects and power consumption may ultimately be the most serious consequences of a wide adoption of AI. But effects such as cognitive atrophy, delusions, and emotional attachment should not be neglected (Stein 2025). Even simpler technologies such as the pocket calculator have reduced the capacity for mental arithmetic (how many are able to multiply two two-digit numbers in their head?), and GPS weakens the ability to locate oneself. General-purpose LLMs not only promise to answer any imaginable question; the way they are set up to interact typically makes it impossible not to personify them to some degree. When the chat-bot refers to itself as ”I” and the user addresses it as ”you,” it is hard to avoid treating the conversational agent as some sort of person. Among the psychological consequences that have been reported is increasing loneliness. Some users withdraw from people and prefer the sycophantic, always pleasant chat-bot. And as more people are sucked into their AI partnerships, there are fewer peers around to connect with. The adverse effects of overly accommodating chat-bots can be overcome by making them less friendly, but AI companies have to balance their interest in getting users to use their services more against the risk of negative side-effects that harm the company’s reputation. Skills such as writing clear prose and formulating a coherent argument require practice, which users who outsource these tasks to LLMs don’t get (Lee et al 2025). Search engines already have a negative impact on memory by making it unnecessary to memorise facts or to try to recall things which are easily looked up; this is only likely to get worse with widespread adoption of chat-bots.

A likely development is the emergence of a class division between those who uncritically adopt AI and those who understand that their own cognitive capabilities are a valuable resource that demands practice and maintenance, and who know to use LLMs with caution, paying critical attention to the plausibility of their answers.

Similar consequences are to be expected from the use of generative AI for image or music creation. Although fewer cautionary tales have been told about artists who outsource their creativity to AI, it is reasonable to assume that some skills may atrophy or never develop in the first place. For useful perspective one could go back to earlier technologies, such as the use of MIDI sequencers in music production. Instead of having to practice and master an instrument, you can type in a list of notes in a MIDI editor or hardware sequencer. This technology doesn’t exactly replace musicians, because it turns out to be really hard to mimic their expressivity this way; instead it opens up new musical possibilities such as humanly impossible rhythmic precision or playing hundreds of notes per second in what is known as black MIDI (with a mechanical precursor in Conlon Nancarrow’s player piano studies). Although I do think all music makers would benefit from mastering an instrument, I don’t deny that many of those who don’t still make interesting music using computers, or sometimes with modular synthesizers – both of which may be set up as interactive interfaces where skills and practice comes to matter. Similarly, generative AI can be used to mimic real music production, which is its most well known use case in musical audio generation. It may be a convenient tool for arriving at songs that sound remarkably similar to already existing music, especially within popular genres with many items to use in the training corpus. Making a standard pop production today usually involves a large team of collaborators who put a significant amount of man-hours into the project. Speeding up this process, removing all tedious parts, replacing the considerable skill set needed with prompt writing, all this promises a further democratisation and increased efficiency of music production. Anyone can create these ready-to-release tracks just with a few well chosen words in the prompt. 
Music production has already been democratised by the availability of music software that anyone can install on their computer instead of having to book an expensive studio, and anyone can publish their music online instead of having to hope for a record label to pick them up. We are already drowning in music. Large quantities are produced, and most of it will barely get any listeners. Add generative AI to this, and it will become even more hopeless to search for outstanding contributions among all the mediocre slop. A regression to the mean appears likely. Since the available training corpus for generative AI will inevitably reflect what is currently popular, or has been popular in the past, feeding this material into the models will reinforce similar kinds of expression: imitating or tweaking them yields more convincing results than trying to conjure up something to which the model has had little exposure in the training set. If generative AI does turn out to cause such a regression to the mean, and if AI-generated material becomes an accepted part of culture, then listeners may over time be exposed to a narrower range of expressions, which might limit their openness to artistic oddities. The diametrically opposite outcome is also possible: listeners might turn away in disgust from anything produced with generative AI and demand real, living artists who can play instruments. Perhaps the most likely outcome is a mixture of both, with different segments of the audience endorsing AI or turning away from it.

If the recent settlement between the three major music labels, Warner, Sony, and Universal, and the AI start-up Klay sets a precedent and becomes the norm, the business model will be that artists opt in to having their material included in the training set, and are compensated when subscription customers generate AI songs based on this material. Meagrely, I assume. A similar near-future development is envisaged by Kollias (2021), in which streaming services offer content instantly tailored to the customer’s mood, and algorithmically curated playlists are replaced by mashups made out of ever smaller structural elements assembled in real time. No doubt many would welcome such a development, which to me sounds like a recipe for keeping users docile and entertained while shattering the remains of a shared culture into customised filter bubbles, one for each individual.

I believe the way generative audio AI has been promoted from the start by companies like Suno and Udio is fundamentally flawed from a creative point of view, not to mention the copyright issues and other problems. The idea of generating complete, finished songs in one go removes the interactive process, as well as any chance to either acquire or apply the expert music production skills that experienced artists possess. That is why I suspect that those artists who find any value at all in generative AI will use it only for select parts, like creating a vocal track if they are not confident singers. Even so, quite a significant part of the creative process is outsourced.

If AI has any use in the creative process, I think it is best limited to the simplest, most routine tasks and to non-generative applications. For example, blind source separation, or de-mixing, is nowadays largely done with machine learning, and as such is sometimes included under the AI label. Splitting a mix into separate instruments can be very valuable for educational purposes, or for remixing and remastering.

Maybe there is also a place for creative abuse of generative AI, if such a thing is possible. John Oswald’s Plunderphonics album can be described as the creative abuse of copyrighted material, which incidentally is also an apt description of generative AI. I’m not so sure what form such creative abuse would take. It would not be any of the obvious things generative AI offers, such as making sophisticated collages, stylistic blends, or grafting new lyrics onto the wrong singer with a mismatched backing band, the equivalents of putting a moustache on the Mona Lisa. All those things are expected uses. If it is hard to come up with examples of creative abuse in which the user may engage, it is because the AI companies themselves are the abusers, creative or destructive as the case may be. And if licensing becomes standard practice, the training set is likely to shrink, resulting in further homogenisation.

There are also indirect consequences of the wider adoption of generative AI. As already mentioned, one is the purported democratisation, which threatens to flood our culture with AI-generated music and images while pushing aside those artists who abstain from using AI. Another indirect consequence is that certain artists are wrongly suspected of having relied on AI in their work. Those who produce digital images with the glossy finish and detailed but slightly misplaced imagery associated with AI, or those who are able to release large catalogues of stylistically divergent music in a short time, may find themselves accused of using AI, whether or not that is the case.

General risks of AI

The threat that AI might make human artists obsolete derives from an assumption that only the end product matters: the digital image or the audio file, not the process behind it or the performance of a human on stage or behind an easel. Yet a large segment of the audience still cares about the artist as a person and an idol.

Some of the worst outcomes of an expanded adoption of AI in society have little to do with the use of generative AI in making art or music. Why, then, should artists care about potentially nefarious uses or negative consequences of AI in other domains? The worst doomsday scenarios, vividly prophesied by some researchers, are associated with a breakthrough in artificial general intelligence (AGI), which surely could create even better and more convincing art, if such a breakthrough is possible at all. But according to these prophecies, if this scenario plays out we will not have any time to experience any artificial artistic breakthroughs, because we will all be dead when superintelligence emerges, although the details of how this would happen are left to the imagination. Personally, I’m not primarily concerned with this possibility. Some have argued that the prospect of AGI serves as yet another marketing ploy. At some level, it is reasonable that AI companies would like to exaggerate their products’ capabilities, and that researchers in AI safety have an interest in depicting their research field as useful and based on plausible reasoning. However, the fact that someone stands to gain from making AGI seem attainable within the near future doesn’t in itself constitute an argument that it cannot happen.

But if, for a moment, one takes the threat of a breakthrough in AGI seriously, and with it a likely exponential intelligence explosion (Bostrom 2014), then I would argue that any endorsement of currently available forms of AI ultimately serves the interest of working towards such a breakthrough. There may be no consensus around the concept of general intelligence itself, and hence even less agreement on how or if its artificial variety could ever be achieved. For example, consciousness or free will may or may not be essential parts of it. In practice, it may not make much difference. If a machine acts as if it were conscious, as if it had free will, as if it were intelligent, then for all practical purposes it doesn’t matter if that is just a fiction. We are often willing to apply theory of mind to computers or computer programmes, even though we know these are only code running on hardware.

Nothing like real intelligence is required for an AI to begin to engage in troubling behaviour. Two cases in point are shut-down avoidance and self-replication. Several LLMs have been tested by being given some task, with or without explicit instructions to allow themselves to be shut down. In a number of cases, these models have nonetheless evaded shut-down or created copies of their own source code.

There is another ground for doubting that exponential growth in AI’s capability to convincingly mimic or surpass human intelligence would go on for very long. It has to do with the limits of any natural process of exponential growth (for a similar argument, see Heinberg 2025). Sooner or later, whether it is bacteria in a petri dish or the spread of a pandemic, there is no more space left to spread into and the growth process stops. Depending on the state of the nutrients or other resources that sustained the growth, it may saturate in a sigmoidal shape, or, if the resources are depleted and not renewable, it will collapse. At some point, physical limitations will slow down and maybe reverse the current growth in AI capabilities and general level of usage. This may happen through unaffordable electricity prices, unavailability of computer chips, or insufficient availability of fresh water for cooling, but it might also happen through a decline in financing or a general disinterest in and aversion towards AI. Personally, I have encountered far more musicians and artists who are sceptical of or concerned about AI than those who endorse it as a part of their creative toolbox. The reasons vary; some are worried about being made obsolete, while others do not find the available AI services sophisticated enough yet.
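The three regimes mentioned here (unbounded exponential growth, sigmoidal saturation, and collapse once a non-renewable resource runs out) can be illustrated with a toy model. The following Python sketch is only an illustration under stated assumptions, not a model of AI: the function names, the use of the logistic equation for the renewable case, and the simple birth-death model feeding on a finite stock for the collapse case are choices made here, and all parameter values are arbitrary.

```python
import math


def exponential(n0: float, r: float, t: float) -> float:
    """Unbounded exponential growth, N(t) = N0 * exp(r * t)."""
    return n0 * math.exp(r * t)


def logistic(n0: float, r: float, k: float, t: float) -> float:
    """Closed-form solution of dN/dt = r * N * (1 - N / K).

    Growth looks exponential at first, then bends into a sigmoid and
    saturates at the carrying capacity K (a renewable resource limit)."""
    return k / (1.0 + (k / n0 - 1.0) * math.exp(-r * t))


def depleting(n0: float, r: float, d: float, s0: float,
              t_end: float, dt: float = 0.01) -> list[float]:
    """Growth fed by a finite, non-renewable stock S (simple Euler integration).

    Births occur at rate r * N * S / S0 and each birth consumes one unit of S;
    deaths occur at rate d * N. As S runs low, deaths outpace births and the
    population collapses instead of levelling off."""
    n, s = n0, s0
    trajectory = [n]
    for _ in range(int(round(t_end / dt))):
        births = r * n * s / s0
        n += dt * (births - d * n)
        s = max(s - dt * births, 0.0)
        trajectory.append(n)
    return trajectory


if __name__ == "__main__":
    # Illustrative parameters only; they are not fitted to any real data.
    for t in range(0, 41, 10):
        print(f"t={t:2d}  exponential={exponential(1, 0.5, t):12.1f}  "
              f"logistic={logistic(1, 0.5, 1000, t):7.1f}")
    run = depleting(1, 1.0, 0.2, 1000, t_end=100)
    print(f"finite resource: peak={max(run):.0f}, final={run[-1]:.3f}")
```

The point of the comparison is qualitative: all three curves start out nearly identical, so the early phase of a growth process says little about whether it will saturate or collapse.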

Some are convinced that AI is presently hyped up and more or less forced upon users who would not use these services if they had to pay for them. According to a blogger writing under the pen name Ploum, AI doesn’t make things easier; instead, it creates more problems and obstacles than it solves (Ploum 2025). Insofar as this is the case, AI doesn’t cause unemployment but creates more unnecessary work.

At least it is clear that in the USA, GDP growth over 2025 can be attributed almost solely to AI. The chip designer Nvidia, OpenAI, and the other big AI companies have been found to engage in circular trade, buying from and selling to each other. The business model presupposes that these companies will generate massive profits within a few years. It seems likely that whatever free models exist today will first be massively promoted as useful for replacing workers and increasing efficiency; then, once enough users are hooked on these services, free access can be replaced by a monthly subscription at increasing rates, for as long as customers are willing to pay. For these reasons there are rising concerns that the AI-driven market is a bubble ready to pop at any moment (Zitron 2025). At least if one focuses on the American market, that is an easy conclusion to draw. Meanwhile, AI is also being developed in China. Even if the American AI industry is currently widely overvalued, its complete crash would not wipe out AI as such; development would continue elsewhere.

If the bubble bursts, the resulting financial crisis will be bad, and if by some miracle it doesn’t, then automation will succeed in removing more jobs and economic inequality will continue to increase. These general trends do not seem to be directly coupled to what goes on among artists and musicians, but I think we should recognise that the arts are also a small but not insignificant contributor, not least because they help shape the perception of AI. What artists do either feeds into the general mythology of AI or contradicts it.

Conclusion

The technologies currently associated with the term generative AI are formidably inefficient. One reason is that generative AI models are trained on the surface level of audio or images instead of on underlying structural features. In the case of audio, a digital recording of an orchestra contains orders of magnitude more data than a symbolic representation in the form of a score. The recording does not separate the musicians’ interpretation or the room acoustics from the symbolic level. Training generative models on scores instead of recordings, and then using appropriate sound synthesis techniques to render the audio, would make the process much more efficient, and would also resolve the copyright problem in certain cases involving sufficiently old scores. Similarly, images could be rendered using techniques such as wire-frame models, texture mapping, and ray-tracing. This again introduces a separation between a symbolic or structural level and the surface level of the rendered image, because the input to a ray-tracing programme may be a simple text file. There are cases where one cannot easily separate the symbolic level from its concrete rendering, but when it can be done, much stands to be gained in efficiency by adopting this practice instead of using AI models trained on the surface level.
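The difference in data volume between the symbolic and the surface level can be made concrete with a small calculation. The Python sketch below assumes a hypothetical six-note melody, a compact three-bytes-per-note encoding, and plain sine tones as a stand-in for proper sound synthesis; none of these choices come from the text, and real synthesis would sound far richer, but the size comparison already holds.

```python
import math
import struct

SAMPLE_RATE = 44_100  # samples per second, as on an audio CD

# A hypothetical symbolic "score": (MIDI note number, duration in seconds).
SCORE = [(60, 0.5), (62, 0.5), (64, 0.5), (65, 0.5), (67, 1.0), (67, 1.0)]


def midi_to_hz(note: int) -> float:
    """Equal-tempered frequency, with MIDI note 69 (A4) at 440 Hz."""
    return 440.0 * 2.0 ** ((note - 69) / 12)


def encode_score(score) -> bytes:
    """Compact symbolic encoding: 1 byte of pitch plus 2 bytes of duration in ms per note."""
    return b"".join(struct.pack("<BH", note, round(dur * 1000)) for note, dur in score)


def render(score, sample_rate: int = SAMPLE_RATE) -> bytes:
    """Render the score as mono 16-bit PCM with plain sine tones (a stand-in for real synthesis)."""
    fade = int(0.01 * sample_rate)  # 10 ms linear fade in and out to avoid clicks
    pcm = bytearray()
    for note, dur in score:
        freq = midi_to_hz(note)
        n = int(dur * sample_rate)
        for i in range(n):
            env = min(1.0, i / fade, (n - 1 - i) / fade)
            sample = 0.3 * env * math.sin(2.0 * math.pi * freq * i / sample_rate)
            pcm += struct.pack("<h", int(sample * 32767))
    return bytes(pcm)


if __name__ == "__main__":
    symbolic = encode_score(SCORE)
    audio = render(SCORE)
    print(f"symbolic: {len(symbolic)} bytes, audio: {len(audio)} bytes, "
          f"ratio: {len(audio) // len(symbolic)}x")
```

Four seconds of mono 16-bit audio at 44.1 kHz take 352,800 bytes, against 18 bytes for the note list, a ratio of roughly four orders of magnitude; a stereo recording would double the audio figure. The rendered PCM bytes could be written to a WAV file with Python's standard `wave` module if one wants to listen to the result.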

Artists working with computational media have much to gain from engaging directly with programming themselves instead of relying on opaque black-box software. The programming doesn’t have to be terribly advanced, and the end product doesn’t need the flashy, polished character often associated with generative AI in order to be artistically interesting, as the chat-bot Maria Futura Hel illustrates. Elementary programming skills are in many ways more empowering than access to the most sophisticated closed-source generative AI models. Relying on chat-bots for programming may be a fast way to get started, but it will hardly help with understanding what the generated code does, or how to fix faults. An interesting challenge for algorithmic composition and generative art in general is to create results as complex as possible with as little code as possible (Holopainen 2021). This goal stands in stark contrast to the drive to throw ever more compute and hardware at the task of generating more and more slop.
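As a small illustration of that challenge (a sketch of a classic technique, not an example taken from Holopainen's paper), the logistic map can drive a melody in a dozen lines of Python. The scale, the function name `logistic_melody`, and the parameter values are arbitrary assumptions; the point is that a single parameter `r` switches the output between a two-note oscillation and an irregular, chaotic line.

```python
# Two octaves of a C major pentatonic scale as MIDI note numbers (an arbitrary choice).
SCALE = [60, 62, 64, 67, 69, 72, 74, 76, 79, 81]


def logistic_melody(r: float, length: int, x0: float = 0.5) -> list[int]:
    """Iterate the logistic map x -> r * x * (1 - x) and map each value onto SCALE.

    For r = 3.2 the map settles into a two-note oscillation; for r = 3.9 it is
    chaotic and the melody wanders irregularly across the scale."""
    notes = []
    x = x0
    for _ in range(length):
        x = r * x * (1.0 - x)
        # x stays within [0, 1] for 0 < r <= 4; clamp the index for x == 1.0
        index = min(int(x * len(SCALE)), len(SCALE) - 1)
        notes.append(SCALE[index])
    return notes


if __name__ == "__main__":
    print("r = 3.2:", logistic_melody(3.2, 16))
    print("r = 3.9:", logistic_melody(3.9, 16))
```

The note lists could be fed to any synthesiser or MIDI library; the design choice here is simply to keep the generative core small enough to be read and understood in full.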

Artificial Intelligence, as commonly understood, is a misleading label for a special type of algorithm paired with huge databases, substantial parts of which, it is safe to assume, have been obtained without asking permission. Without the proper algorithm the data would not be useful, and without the data the algorithm would not generate anything. The purported intelligence is not artificial; it is absent. As a cynical joke, one may surmise that if AI one day actually overtakes humans in intelligence, it will be because human IQ will by then have declined to an even lower level. Indeed, with extended outsourcing of cognitive and creative tasks to AI, a decline in these capabilities among frequent users should be expected.

Among the promises of AI is the democratisation of artistic creation by making the process accessible to everyone. But writing prompts is a crude means of creating music or images. There is no reason to view the difficulty of acquiring the skills needed to craft art without the aid of AI as an unnecessary stumbling block that must be removed. The learning process also refines one’s ability to discern quality.

I don’t expect much good to come out of increased AI use. The current American market bubble might collapse, but there is no reason to expect this to wipe out the AI phenomenon as such, notably because of the parallel AI industry in China. As with many other technological advances that eventually came to play a significant role in music and art, a strong incentive to develop AI has come from military applications. AI technology is applied in surveillance, repression, and war. Without those markets, AI companies would have much greater difficulty getting financed. Culture is soft power, and using generative AI can easily slip into an implicit defence of whatever else AI comes to stand for. Technology is supposedly neutral and can be used for good or for ill, but generative AI is commonly offered as proprietary software services owned by billionaires whose agendas do not necessarily align with those of artist communities, so one should pause and consider what forces one is supporting. Perhaps the most dangerous misunderstanding is the techno-optimist assumption that AI will solve all problems, including those caused by it.

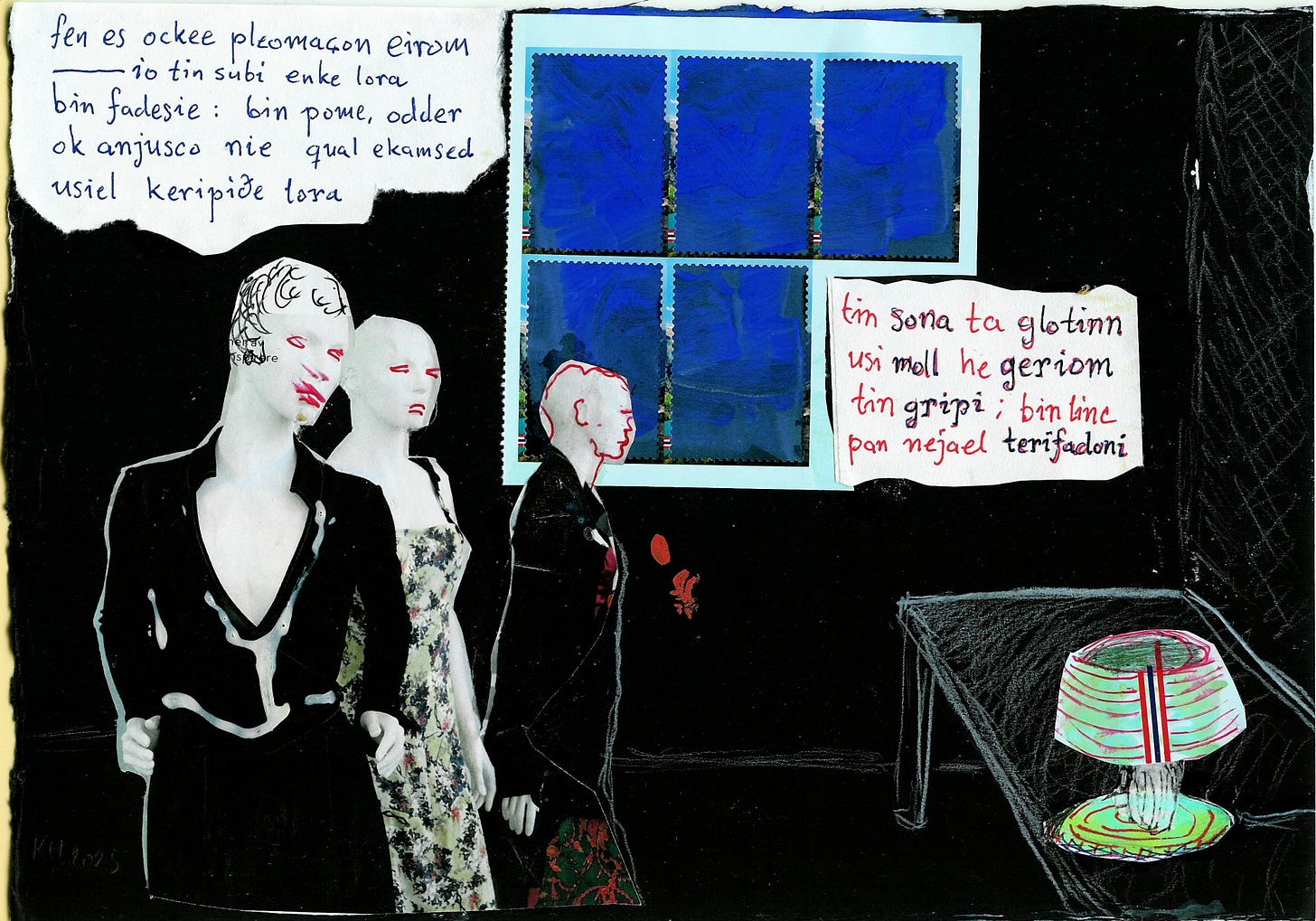

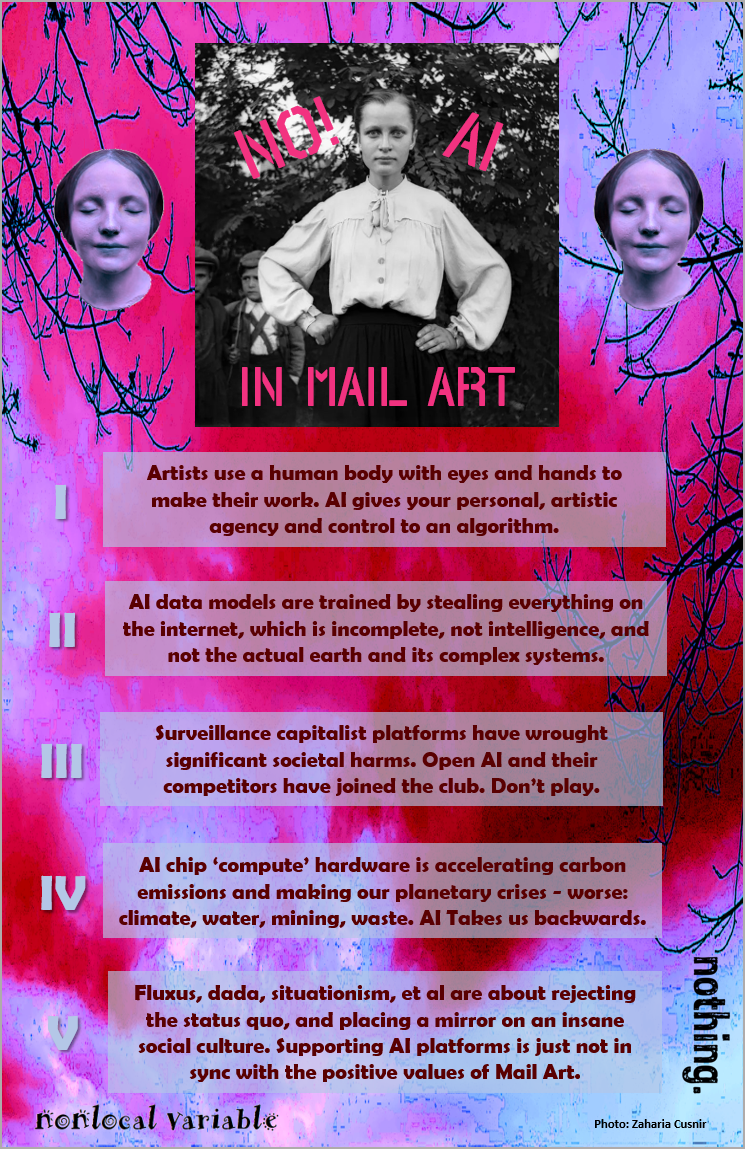

The reception among artists ranges from hostility to full embrace of AI. My guess concerning those who hold an unqualified enthusiastic view is that they are simply not well enough informed about the negative consequences, primarily the environmental and psychological effects. A mail artist known as Nonlocal Variable offers roughly the same critique as mine, only much more concisely (Fig. 1). One page of a mail art booklet shows a location where a data centre is being built on farmland, with the caption: “If you use AI, you are eliminating arable land, consuming energy and water subsidized given to big tech, and boosting citizens’ power and water prices. Stop it, fight data centres, reject AI.” The previous page says: “AI used by artists are doing interesting work. Sadly, that also means supporting a small group of literally insane neoliberal monarchist men in Silicon Valley.” This is a valid observation, but unfortunately still too few artists make this connection. The purported democratisation of art by means of corporately owned AI is an illusion. A real democratisation can happen by continuing in the spirit of Fluxus, recognising that anyone can be an artist, and that there is no use for AI.

Bibliography

Bostrom, N. (2014). Superintelligence. Oxford University Press.

Carvalhais, M. (2022). Art and Computation. Rotterdam: V2_Publishing.

Edwards, B. (2025). Open Source Devs Say AI Crawlers Dominate Traffic, Forcing Blocks on Entire Countries. Ars Technica, March 25, 2025.

Essl, K. (2007). Algorithmic Composition. In Collins, N. and d’Escriván, J. (eds): The Cambridge Companion to Electronic Music, pp. 107–125. Cambridge University Press.

Hayden, E. (2025). Disney, Universal Launch AI Legal Battle, Sue Midjourney Over Copyright Claims. ARTnews, June 11, 2025.

Heinberg, R. (2025). MuseLetter #387: AI Utopia, AI Apocalypse, and AI Reality.

Holopainen, R. (2021). Making Complex Music with Simple Algorithms, is it Even Possible? Revista Vórtex, v.9, n.2.

Howells, D. and Larsen, T. (2025). Communities Pay the Price for ‘Free’ AI Tools. AI data centers produce massive noise pollution, use huge amounts of water, and keep us hooked on fossil fuels. Otherwords, Sept. 3, 2025.

Kane, C. (2014). Chromatic Algorithms. Synthetic Color, Computer Art, and Aesthetics after Code. The University of Chicago Press.

Kollias, P.-A. (2018). Overviewing a Field of Self-Organising Music Interfaces: Autonomous, Distributed, Environmentally Aware, Feedback Systems. 23rd ACM Conference on Intelligent User Interfaces, Intelligent Music Interfaces for Listening and Creation, Tokyo, Japan.

Kollias, P.-A. (2021). Ambient Intelligence in Electroacoustic Music: Towards a Future of Self-Organising Music. Proceedings of the Electroacoustic Music Studies Network Conference, Leicester, UK.

Lee, H.-P., Sarkar, A., Tankelevitch, L., Drosos, I., Rintel, S., Banks, R., and Wilson, N. (2025). The Impact of Generative AI on Critical Thinking: Self-Reported Reductions in Cognitive Effort and Confidence Effects From a Survey of Knowledge Workers. In CHI Conference on Human Factors in Computing Systems (CHI ’25), April 26–May 01, 2025, Yokohama, Japan.

Minsky, M., Shannon, C., Rochester, N., and McCarthy, J. (1955). A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence.

Nierhaus, G. (2010). Algorithmic Composition. Paradigms of Automated Music Generation. Springer.

Ploum (2025). Quand éclatera la bulle IA…

Sanfilippo, D. and Valle, A. (2013). Feedback Systems: An Analytical Framework. Computer Music Journal, Vol. 37, No. 2, Summer 2013, pp. 12–27.

Stein, Z. (2025). AI’s Unseen Risks: How Artificial Intelligence Could Harm Future Generations. The Great Simplification. Podcast, host Nate Hagens.

Ugander, J., & Epstein, Z. (2024). The Art of Randomness: Sampling and Chance in the Age of Algorithmic Reproduction. Harvard Data Science Review, 6(4).

Yañez-Barnuevo, M. (2025). Data Center Energy Needs Could Upend Power Grids and Threaten the Climate. Environmental and Energy Study Institute, April 15, 2025.

Zaripov, R. Kh. and Russell, J. G. K. (1969). Cybernetics and Music. Perspectives of New Music, Vol. 7, No. 2, Spring–Summer 1969, pp. 115–154.

Zitron, E. (2025). The Case Against Generative AI. Where’s Your Ed At? September 29, 2025.

Apparently, the first AI artwork sold through an auction house was a portrait from a series depicting a fictional Belamy family, made by the group Obvious, which went for $432,500 at Christie’s in 2018.

The Copyright Office’s decision reveals the details. More recently, and after this article was first submitted, the US Supreme Court has declined to review the rule that AI-generated art cannot be copyrighted.